The rules around AI in applications have changed faster than most platforms have adapted to them. Many programs that welcomed AI assistance two years ago now explicitly prohibit it. Others permit it, but only with disclosure. A few have built detection systems that screen submissions before a human ever reads them.

This post explains how we think about that landscape, what AI does on this platform, and what it deliberately does not do, so you can use the platform with confidence that it is helping you, not putting you at risk.

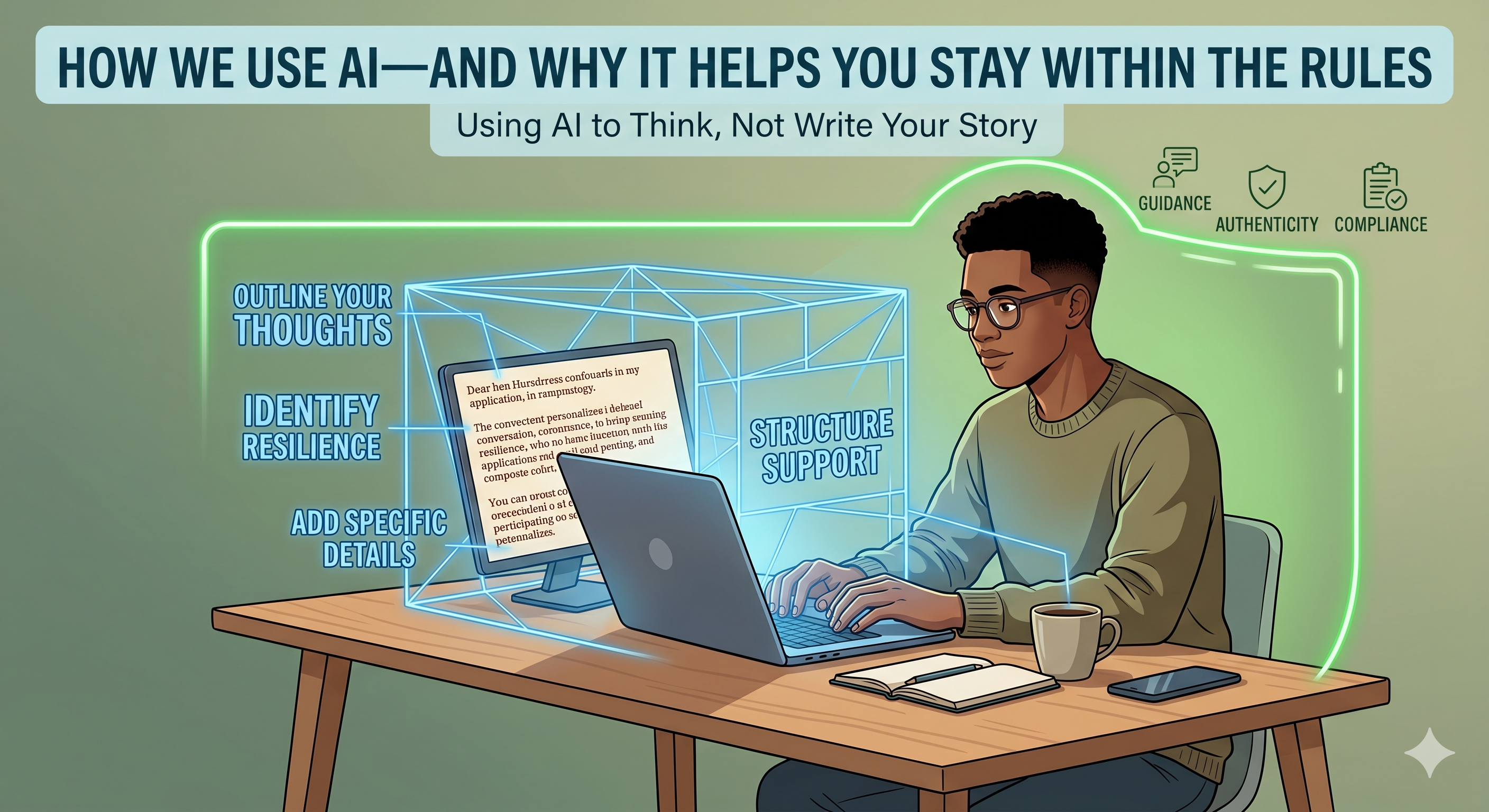

Where AI helps you, and where it shouldn't

There is a useful line that we try to hold to.

AI helps you when it makes you a better thinker about your own story: when it points out that an experience you mentioned in passing is actually the strongest evidence of resilience in your application, or when it asks you a question that draws out a detail you forgot to include. That kind of help leaves your work more yours, not less.

AI starts to hurt you when it speaks for you instead of with you: when it puts polished words into your application that don't sound like you, when it generates content you would not have written and could not defend if asked about it later, or when it produces something a reviewer can identify as machine-written.

The first kind of help respects you. The second kind replaces you. We try hard to design only the first kind into this platform.

What our AI does

Our AI reads your stories carefully and identifies the behavioral patterns research has linked to long-term success. It generates the dimensional scores you see on your profile. It flags where your evidence is strong and where it is thin. It reads opportunity descriptions and finds the ones that match your actual profile, not the ones that match a generic image of who you might be.

It also helps you think more clearly about what you've already done. When you describe an experience, it can ask you the kind of questions a thoughtful mentor would ask: what specifically did you do, what was the outcome, who else was affected. The goal is to draw out what you already know about yourself, not to manufacture new content.

What our AI deliberately does not do

We make several specific choices that some other platforms do not.

We do not generate finished application content for you to submit unedited. When you use any writing assistance feature on the platform, it produces scaffolding by default: outlines, structural suggestions, and prompts to think about. If you explicitly ask for a draft, we mark it clearly as a draft for you to edit, with a notice telling you that submitting it unedited may violate the policies of the programs you are applying to.

We do not hide that AI was involved. When AI helped produce text, that fact is recorded and visible to you. You always know which parts of your work were assisted and which were entirely your own. This is the information you need if any program asks you to disclose AI use.

We do not pretend to do things we cannot reliably do. Our AI cannot guarantee a submission will pass an AI-detection system. It cannot tell you that any specific program will accept assistance from any specific tool. Those rules change quickly and vary by institution. What we can do is help you stay on the safer side of the line, and tell you clearly when something is your responsibility to verify directly with the program.

How we help you stay within the rules

Here are three concrete things you can do, and the platform supports each one.

When you write any application content with AI help, rewrite it in your own voice before you submit it anywhere. A useful test: if a reviewer called you tomorrow and asked you to explain a paragraph from your application, could you do it without re-reading? If not, the paragraph isn't yours yet.

When a program asks whether you used AI assistance, answer honestly. Most programs that ask are not trying to trap you; they want to know how to evaluate your work. A clear, specific disclosure ("I used a platform to outline my structure, then wrote the content myself") is almost always treated more favorably than a denial that turns out not to be true.

When you are unsure what a specific program allows, check the program's website before you submit. Policies vary, and many programs publish their rules clearly. When they do not, contacting the program directly is the safest move, and we encourage you to do so.

Why this matters more than convenience

The temptation with AI is to use it to save time. The platforms that survive the next few years will be the ones that help students use AI to think better, not to write faster. We are trying to be one of those platforms.

Your application is a record of who you are and what you have done. The point is not to make it faster to produce. The point is to make it more accurate to the truth of your story. AI can help with that when it is used carefully, by you, with your judgment intact.

That is the kind of partnership we are trying to build.